This mathematics problem involves applying core mathematical principles and formulas. Below you will find a complete step-by-step solution with detailed explanations for each step, helping you understand not just the answer but the method behind it.

ScanSolve AI Answer

Answer

Proven

Here are the solutions to the problems.

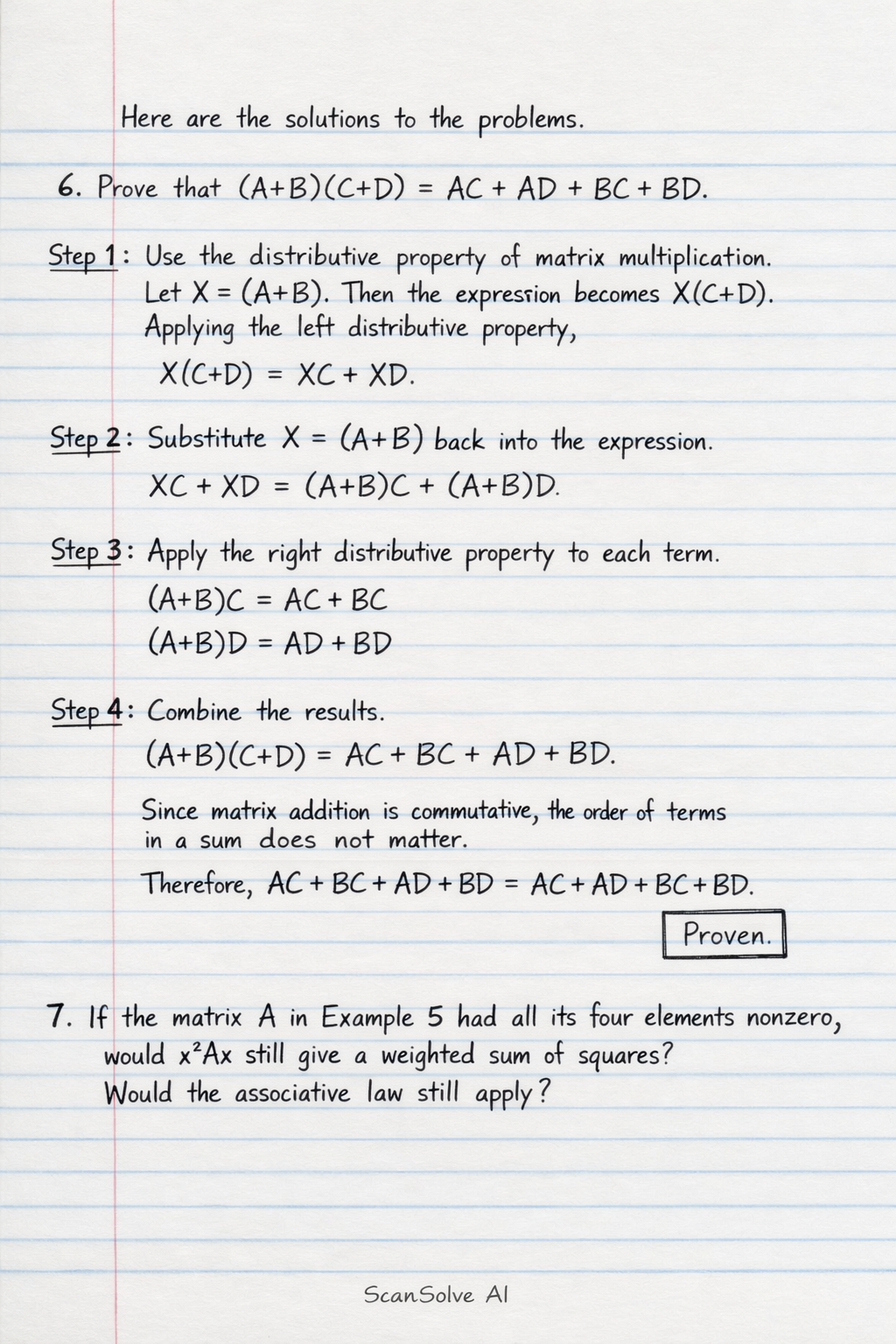

6. Prove that $(A+B)(C+D) = AC + AD + BC + BD$.

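Step 1: Use the distributive property of matrix multiplication, assuming the matrices are conformable for the sums and products involved. Let $X = (A+B)$. Then the expression $(A+B)(C+D)$ becomes $X(C+D)$. Applying the left distributive property, $X(C+D) = XC + XD$.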

Step 2: Substitute $X = (A+B)$ back into the expression: $XC + XD = (A+B)C + (A+B)D$.

Step 3: Apply the right distributive property to each term: $(A+B)C = AC + BC$ and $(A+B)D = AD + BD$.

Step 4: Combine the results: $(A+B)(C+D) = AC + BC + AD + BD$. Since matrix addition is commutative, the order of terms in a sum does not matter. Therefore, $(A+B)(C+D) = AC + AD + BC + BD$.
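As a quick numerical sanity check of the identity in problem 6 (not a substitute for the proof), the following minimal Python sketch compares both sides for one choice of $2 \times 2$ integer matrices. The helper names `mat_add` and `mat_mul` and the sample matrices are illustrative assumptions, not part of the original problem.

```python
def mat_add(P, Q):
    """Element-wise sum of two matrices with the same dimensions."""
    return [[p + q for p, q in zip(row_p, row_q)] for row_p, row_q in zip(P, Q)]


def mat_mul(P, Q):
    """Product of P (m x n) and Q (n x p), each given as a list of rows."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*Q)] for row in P]


# Arbitrary sample matrices (hypothetical values chosen for illustration).
A = [[1, 2], [3, 4]]
B = [[0, -1], [5, 2]]
C = [[2, 0], [1, 3]]
D = [[-1, 4], [2, 2]]

# Left-hand side: (A + B)(C + D).
lhs = mat_mul(mat_add(A, B), mat_add(C, D))

# Right-hand side: AC + AD + BC + BD, summed in that order.
rhs = mat_add(
    mat_add(mat_mul(A, C), mat_mul(A, D)),
    mat_add(mat_mul(B, C), mat_mul(B, D)),
)

print(lhs == rhs)  # True for these sample matrices
```

Agreement on one example only illustrates the identity. The general result rests on the distributive steps of the proof.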

7. If the matrix $A$ in Example 5 had all its four elements nonzero, would $x'Ax$ still give a weighted sum of squares? Would the associative law still apply?

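To answer this, we assume $x$ is a $2 \times 1$ column vector, and $A$ is a $2 \times 2$ matrix. Let $x = \begin{bmatrix} x_1 \\ x_2 \end{bmatrix}$ and $A = \begin{bmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{bmatrix}$. Then $x'Ax = a_{11}x_1^2 + (a_{12} + a_{21})x_1x_2 + a_{22}x_2^2$.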

Would $x'Ax$ still give a weighted sum of squares? If all four elements of $A$ are nonzero, then $a_{12}$ and $a_{21}$ are nonzero. This means the cross-product term $(a_{12} + a_{21})x_1x_2$ will generally be present (it vanishes only if $a_{12} = -a_{21}$). A "weighted sum of squares" typically refers to a sum of terms of the form $a_{ii}x_i^2$, without cross-product terms like $x_1x_2$. Therefore, if $A$ is not a diagonal matrix (i.e., $a_{12}$ or $a_{21}$ is nonzero), $x'Ax$ is a quadratic form but generally not a "weighted sum of squares" in the sense of containing only squared terms. ❌ No, it would generally be a quadratic form with cross-product terms, not solely a weighted sum of squares.
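To make the cross-product term concrete, here is a small Python sketch that evaluates the quadratic form $x'Ax$ for a diagonal matrix, a matrix with all four elements nonzero, and a matrix whose off-diagonal elements cancel. The function name `quadratic_form` and all numeric values are hypothetical, chosen only for illustration.

```python
def quadratic_form(A, x):
    """Return x'Ax for a 2x2 matrix A (list of rows) and a 2-vector x."""
    return sum(x[i] * A[i][j] * x[j] for i in range(2) for j in range(2))


x = [1, 2]

A_diag = [[2, 0], [0, 5]]     # diagonal: only squared terms appear
A_full = [[2, 3], [1, 5]]     # all four elements nonzero
A_cancel = [[2, 3], [-3, 5]]  # a12 = -a21, so the cross term vanishes

diag_value = quadratic_form(A_diag, x)      # 2*1 + 5*4 = 22
full_value = quadratic_form(A_full, x)      # 22 plus the cross term
cancel_value = quadratic_form(A_cancel, x)  # equals the diagonal case

# Cross-product term (a12 + a21) * x1 * x2 for A_full.
cross_term = (A_full[0][1] + A_full[1][0]) * x[0] * x[1]

print(diag_value, full_value, cross_term, cancel_value)  # 22 30 8 22
```

The difference between `full_value` and `diag_value` is exactly the cross-product term, which is why the result is no longer a pure weighted sum of squares.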

Would the associative law still apply? The associative law of matrix multiplication, $(AB)C = A(BC)$, applies whenever the matrix dimensions are compatible for multiplication. The values of the elements (zero or nonzero) do not affect the validity of the associative law itself, so $(x'A)x = x'(Ax)$ still holds. ✅ Yes, the associative law of matrix multiplication always applies when the dimensions are compatible.
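The associativity claim can be illustrated with a short Python sketch that computes $(x'A)x$ and $x'(Ax)$ separately for a matrix with all four elements nonzero. The helper `mat_mul` and the sample values are assumptions made for this example.

```python
def mat_mul(P, Q):
    """Product of P (m x n) and Q (n x p), each given as a list of rows."""
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*Q)] for row in P]


# Hypothetical sample values: x as a 2x1 column, x' as a 1x2 row.
x_col = [[1], [2]]
x_row = [[1, 2]]
A = [[2, 3], [1, 5]]

left_first = mat_mul(mat_mul(x_row, A), x_col)   # (x'A)x
right_first = mat_mul(x_row, mat_mul(A, x_col))  # x'(Ax)

print(left_first, right_first)  # [[30]] [[30]]
```

Both groupings produce the same $1 \times 1$ result, as the associative law requires whenever the dimensions are compatible.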

8. Name some situations or contexts where the notion of a weighted or unweighted sum of squares may be relevant.
